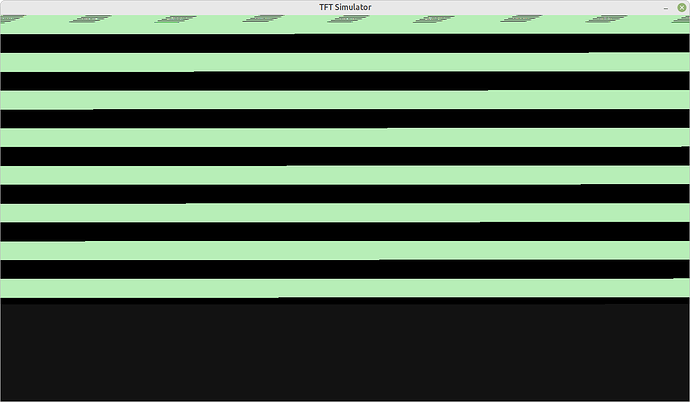

Incorrect rendering occurs with the SDL backend. A single button is supposed to be visible in the window. Instead, green and black stripes are rendered in the window.

Colour depth has been set to 32 (target device is a PC). Window resolution is set to 1024x768.

Hmm, looks like a mismatch between the resolutions in use.

LVGL set to 640 x 480? And the display size in the simulator set to 1024 x 768?

How is the resolution set up with the SDL backend?
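To illustrate the kind of mismatch suspected above: if pixels are written into a buffer laid out with 640-pixel rows while the window reads the same memory with 1024-pixel rows, every row after the first lands at the wrong position and the image shears into stripes. The following is a minimal sketch in plain C, not LVGL or SDL code; `pixel_pos_t`, `fb_index` and `fb_reinterpret` are hypothetical names used only for illustration.

```c
#include <stddef.h>

/* A pixel position inside a window. */
typedef struct {
    size_t x;
    size_t y;
} pixel_pos_t;

/* Linear index of pixel (x, y) in a row-major framebuffer
 * whose rows are `width` pixels long. */
static size_t fb_index(size_t x, size_t y, size_t width)
{
    return y * width + x;
}

/* Where a pixel written at (x, y) with rows of `src_width` pixels
 * ends up when the same memory is displayed with rows of `dst_width`. */
static pixel_pos_t fb_reinterpret(size_t x, size_t y,
                                  size_t src_width, size_t dst_width)
{
    size_t index = fb_index(x, y, src_width);
    pixel_pos_t pos = { index % dst_width, index / dst_width };
    return pos;
}
```

For example, `fb_reinterpret(0, 1, 640, 1024)` gives x = 640, y = 0: the start of the second 640-pixel row shows up in the middle of the first 1024-pixel row. When both widths match, every pixel stays where it was written.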

Both the hor_res and ver_res fields are set on lv_disp_drv_t. This should be enough to change the resolution; however, the SDL backend (via LV Drivers 8.3) completely ignores the changes. The backend appears to have a bug where changing the resolution via lv_disp_drv_t doesn’t change the window size to match.

By SDL backend, do you mean SDL GPU rendering (enabled here) or normal software rendering with SDL?

If you mean SDL GPU rendering you can use sdl_display_resize.

For SW rendering I think it’s not implemented yet, but I can add it if needed.
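As far as I understand it, with software rendering the SDL driver in LV Drivers 8.3 sizes its window from compile-time macros in lv_drv_conf.h rather than from lv_disp_drv_t, which would explain why changing hor_res and ver_res has no visible effect on the window. A minimal fragment, assuming the macro names from the 8.3 lv_drv_conf_template.h (check them against your own copy, since other releases may use different names):

```c
/* lv_drv_conf.h (fragment). Macro names are assumed from the
 * LV Drivers 8.3 configuration template; verify against your copy. */
#define USE_SDL 1

#if USE_SDL
#  define SDL_HOR_RES 1024  /* window width in pixels  */
#  define SDL_VER_RES 768   /* window height in pixels */
#  define SDL_ZOOM    1     /* integer scale factor applied to the window */
#endif
```

With these values the window should be created at SDL_HOR_RES * SDL_ZOOM by SDL_VER_RES * SDL_ZOOM pixels, so hor_res and ver_res on lv_disp_drv_t would need to be set to the same 1024 x 768.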

Software rendering with SDL. An older version of LV Drivers (8.0) is used due to a regression with display rendering in the newer versions. Unfortunately GPU rendering isn’t an option with LV Drivers 8.0, otherwise I would try it out and see if the window resizing works.

What was that regression? It’d be great to fix because I’d like to add this new function to the latest version.

The working version is on the master branch (uses LVGL 8.0.2). Display rendering with the SDL backend has regressed (no UI is visible, just garbage displayed in the window). The regression can be reproduced on the lvgl-8.3 branch.

Does it work if you set this in lv_conf.h?

```c
#define LV_TICK_CUSTOM 1
#if LV_TICK_CUSTOM
    #define LV_TICK_CUSTOM_INCLUDE <SDL2/SDL.h>          /*Header for the system time function*/
    #define LV_TICK_CUSTOM_SYS_TIME_EXPR SDL_GetTicks()  /*Expression evaluating to current system time in ms*/
#endif   /*LV_TICK_CUSTOM*/
```

After applying the changes (at the library file level), the result is the same. Changing the library configuration has no effect. However, if the LV_TICK_CUSTOM macro is defined in build.gradle.kts and the cinterop Gradle task is run, the lv_tick_inc function can no longer be used in a Kotlin source file (a file with the kt extension; Kotlin script files use the kts extension).

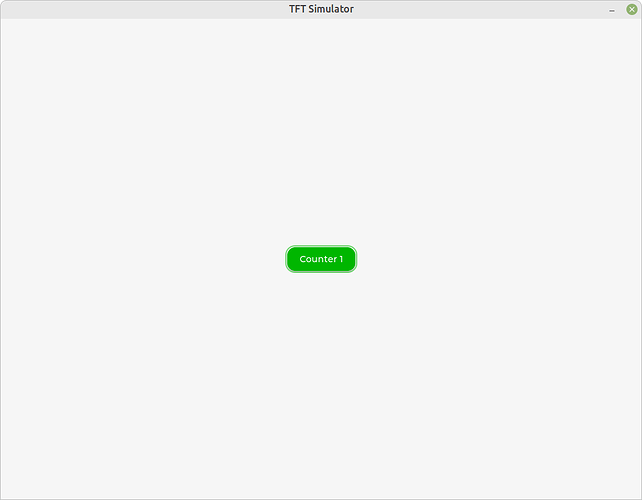

Incorrect library configuration has struck again. I got caught out using the wrong version of one of the configuration files. After switching to the correct configuration (for generating the static library files) and using updated versions of the static library files, the rendering now works correctly.


It would be great if there were an option to use Kconfig to generate the library configuration and the static library file(s) in a single step (via an NCurses-style UI), without having to mess around with configuration files and deal directly with cmake.

Note that LVGL doesn’t ship any Kconfig “backends”, so you can’t configure LVGL on its own with Kconfig. It can, however, be integrated into a framework where Kconfig is used.

We have a discussion about a similar Kconfig-related topic here. Feel free to join.