Description

I am trying to display images with LV_COLOR_DEPTH set to 1 and I’m running into issues. I have done this successfully without LVGL, so I assume it is also possible with this library.

What MCU/Processor/Board and compiler are you using?

All the examples use the SDL simulator with VS Code on Ubuntu 20.04, but my ultimate goal is to control the GDEW042T2 e-paper display with a Raspberry Pi 4.

What LVGL version are you using?

Version 8.0.0 for the simulator and version 8.3.8 on the Raspberry Pi 4. I’m getting the same results on both.

What do you want to achieve?

Eventually I want to display images generated at runtime on an e-paper display, but currently I’m hitting roadblocks even with images compiled into the program.

What have you tried so far?

I cloned the VS Code simulator, then built and ran the code without a problem with LV_COLOR_DEPTH set to 32. I then set the color depth to 1 in lv_conf.h by changing line 24 as follows:

    -#define LV_COLOR_DEPTH 32
    +#define LV_COLOR_DEPTH 1

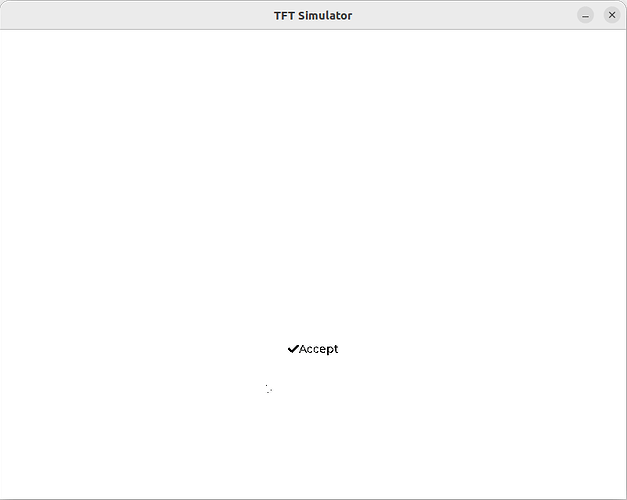

I used lv_example_img_1 on both the simulator and the final hardware. The image does not show, but the image label and accompanying text do. Pictures are below.

Code to reproduce

I used the provided image example code unmodified, so I’m not pasting it here to save you from scrolling.

On the final hardware, I am doing bit packing in the set_px_cb callback to render graphics properly:

    #define DISP_HOR_RES 400
    #define DISP_VER_RES 300
    #define EPD_ROW_LEN (DISP_HOR_RES / 8u)

    #define BIT_SET(a, b) ((a) |= (1U << (b)))
    #define BIT_CLEAR(a, b) ((a) &= ~(1U << (b)))

    /* omitted irrelevant code */

    void my_set_px_cb(lv_disp_drv_t * disp_drv, uint8_t * buf, lv_coord_t buf_w, lv_coord_t x, lv_coord_t y, lv_color_t color, lv_opa_t opa)
    {
        /* 8 pixels per byte, leftmost pixel in the most significant bit */
        uint16_t byte_index = (x >> 3u) + (y * EPD_ROW_LEN);
        uint8_t bit_index = x & 0x07u;

        if (color.full) {
            BIT_SET(buf[byte_index], 7 - bit_index);
        } else {
            BIT_CLEAR(buf[byte_index], 7 - bit_index);
        }
    }
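To make the packing scheme easier to check in isolation, here is a minimal standalone sketch of the same MSB-first layout with no LVGL types involved. The helper name `pack_px_msb_first` is hypothetical and not part of LVGL or the vendor code. Unlike the callback above, it derives the row stride from a width argument rather than from DISP_HOR_RES, on the assumption that the buffer width is a multiple of 8; whether that matters for the callback depends on what LVGL passes in buf_w, which I have not verified.

```c
#include <stddef.h>
#include <stdint.h>

/* Hypothetical helper, not part of LVGL: write one pixel into a 1 bpp buffer.
 * buf_w is the buffer width in pixels (assumed to be a multiple of 8);
 * x and y are relative to the buffer's top-left corner. */
static void pack_px_msb_first(uint8_t *buf, uint32_t buf_w,
                              uint32_t x, uint32_t y, int on)
{
    uint32_t row_len = buf_w / 8u;                      /* bytes per row */
    size_t byte_index = (size_t)y * row_len + (x >> 3); /* 8 pixels per byte */
    uint8_t mask = (uint8_t)(0x80u >> (x & 0x07u));     /* leftmost pixel = bit 7 */

    if (on) {
        buf[byte_index] |= mask;
    } else {
        buf[byte_index] &= (uint8_t)~mask;
    }
}
```

For a 400 pixel wide buffer, setting pixel (0, 0) should write 0x80 into byte 0, and setting pixel (9, 1) should write 0x40 into byte 51 (50 bytes for the first row, plus one byte for x = 8..15).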

This renders other examples, such as labels, as expected, but images aren’t working. I thought I might have to use a monochrome theme, but I believe that’s already enabled on line 489 of lv_conf.h:

    #define LV_USE_THEME_MONO 1

Screenshot and/or video

With LV_COLOR_DEPTH set to 32 the simulator works perfectly.

Things get weird with LV_COLOR_DEPTH set to 1:

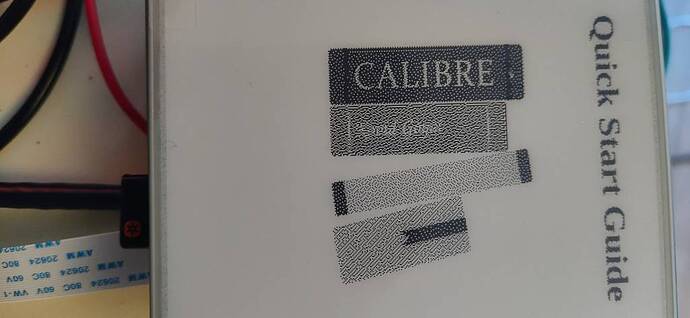

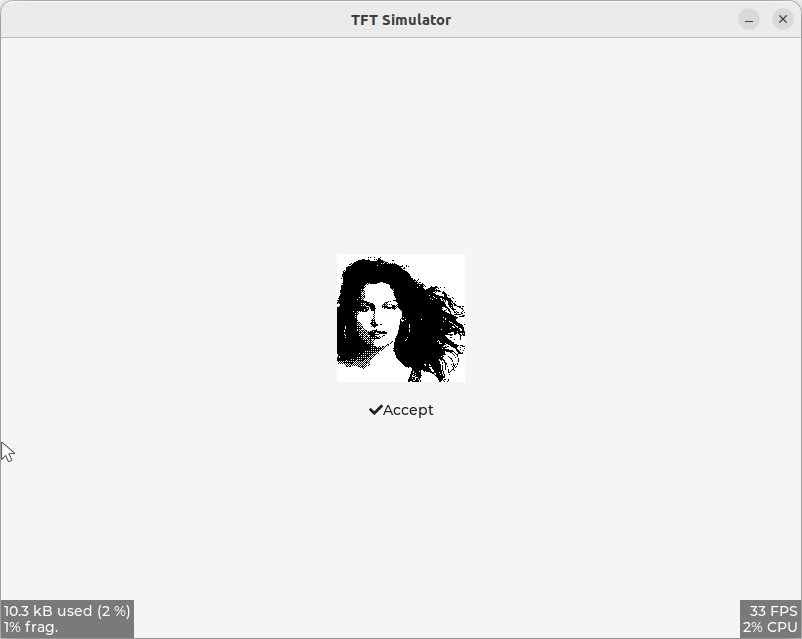

Any tips? Again, without LVGL the final hardware can display images. The screenshot below was taken with handwritten code provided by the vendor, displaying an image produced at runtime. I’ve read through that code and it appears to use only black and white pixels, with no grayscale, and I did not find any fancy image processing. The specific code is here: https://github.com/waveshareteam/e-Paper/blob/master/RaspberryPi_JetsonNano/c/lib/e-Paper/EPD_4in2.c#L585