Description

I am using the FRDM-K32L3A6 board. LVGL runs successfully with SPI in polling mode. It also runs with DMA, but only if I block until each flush transfer has finished.

What MCU/Processor/Board and compiler are you using?

FRDM-K32L3A6 Board, MCUXpresso

What LVGL version are you using?

7.7

What do you want to achieve?

Fully DMA-driven flushing, without busy-waiting in a while() loop.

What have you tried so far?

Code to reproduce

With the following code, it works:

```c
void my_flush_cb(lv_disp_drv_t * disp_drv, const lv_area_t * area, lv_color_t * color_p)
{
    /* Put all pixels to the screen at once.
     * Can be done by DMA as well. */
    // disp_p = disp_drv;
    uint16_t * color;
    color = (uint16_t *) color_p;
    lcdDrawMultiPixels(area->x1, area->y1, area->x2, area->y2, color);

    /* Block until the SPI/DMA transfer has completed. */
    while(!isTransferCompleted);

    /* IMPORTANT!!!
     * Inform the graphics library that you are ready with the flushing */
    lv_disp_flush_ready(disp_drv);
}
```
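The blocking wait above depends on `isTransferCompleted` being shared between the SPI callback and the flush function. The post does not show how the flag is declared or where it is reset, so the following is only a minimal sketch of that pattern under two assumptions: the flag is declared `volatile`, and it is cleared before each transfer starts. `start_dma_transfer`, `transfer_done_isr`, and `send_and_wait` are hypothetical names, and the stub completes the transfer synchronously so the sketch runs on a PC.

```c
#include <stdbool.h>
#include <stdint.h>

/* Written by the completion callback, read by the waiting code.
 * 'volatile' keeps the compiler from caching the value in the wait loop. */
static volatile bool isTransferCompleted = false;
static int transfers_started = 0;

/* Stand-in for the SPI/eDMA completion callback. */
static void transfer_done_isr(void)
{
    isTransferCompleted = true;
}

/* Hypothetical stand-in for starting a DMA transfer. On hardware this would
 * return immediately and the callback would fire later; here it completes
 * synchronously so the sketch terminates. */
static void start_dma_transfer(const uint16_t *data, uint32_t count)
{
    (void)data;
    (void)count;
    transfers_started++;
    transfer_done_isr();
}

void send_and_wait(const uint16_t *data, uint32_t count)
{
    /* Clear the flag first so a stale 'true' from an earlier transfer
     * cannot end the wait before this transfer has finished. */
    isTransferCompleted = false;
    start_dma_transfer(data, count);
    while (!isTransferCompleted) {
        /* busy-wait */
    }
}
```

If the flag were never cleared, the second and later waits would fall through immediately, which would look the same as calling `lv_disp_flush_ready()` too early.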

However, if I call lv_disp_flush_ready() from the SPI callback instead, the screen is not filled correctly. I assume the buffers are getting mixed up:

```c
void LPSPI_MasterUserCallback(LPSPI_Type *base, lpspi_master_edma_handle_t *handle, status_t status, void *userData)
{
    if(lcdInitDone)
    {
        lv_disp_flush_ready(&disp_drv);
    }
    isTransferCompleted = true;
}
```
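One possible explanation, offered as a guess rather than a diagnosis: if `lcdDrawMultiPixels` sends a window command over SPI before the pixel data, and the callback fires for every transfer, then `lv_disp_flush_ready()` may be called before the pixel DMA has finished, and LVGL may start redrawing a buffer that is still being sent. The sketch below signals LVGL only when a pixel transfer is actually in flight. The LVGL types are reduced stand-ins so the example compiles on its own, and `lcdSetWindow`, `lcdStartPixelDma`, and `on_spi_transfer_done` are hypothetical names, not part of the original code.

```c
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* Reduced stand-ins for LVGL v7 types and lv_disp_flush_ready();
 * in a real project these come from lvgl.h. */
typedef struct { int16_t x1, y1, x2, y2; } lv_area_t;
typedef struct { uint16_t full; } lv_color_t;
typedef struct { int flush_ready_calls; } lv_disp_drv_t;
static void lv_disp_flush_ready(lv_disp_drv_t *drv) { drv->flush_ready_calls++; }

/* Hypothetical split of lcdDrawMultiPixels(): a window command followed by
 * a non-blocking pixel DMA start. Both are stubs here. */
static size_t last_pixel_count = 0;
static void lcdSetWindow(int16_t x1, int16_t y1, int16_t x2, int16_t y2)
{
    (void)x1; (void)y1; (void)x2; (void)y2;
}
static void lcdStartPixelDma(const uint16_t *pixels, size_t count)
{
    (void)pixels;
    last_pixel_count = count;
}

/* Non-NULL only while a pixel DMA transfer is in flight. */
static lv_disp_drv_t *volatile pending_drv = NULL;

void my_flush_cb(lv_disp_drv_t *disp_drv, const lv_area_t *area, lv_color_t *color_p)
{
    size_t count = (size_t)(area->x2 - area->x1 + 1) *
                   (size_t)(area->y2 - area->y1 + 1);

    /* Assumed to have finished before the pixel transfer is armed. */
    lcdSetWindow(area->x1, area->y1, area->x2, area->y2);

    /* Arm the completion handler just before starting the pixel DMA. */
    pending_drv = disp_drv;
    lcdStartPixelDma((const uint16_t *)color_p, count);
    /* Return without waiting; the callback reports completion. */
}

/* Intended to be called from the SPI completion callback. */
void on_spi_transfer_done(void)
{
    lv_disp_drv_t *drv = pending_drv;
    if (drv != NULL) {
        pending_drv = NULL;        /* ignore completions of other transfers */
        lv_disp_flush_ready(drv);  /* signal LVGL for pixel transfers only */
    }
}
```

Storing the driver pointer passed to the flush callback also avoids relying on a global `disp_drv` inside the SPI callback. Whether this fixes the corrupted screen depends on how `lcdDrawMultiPixels` is actually implemented, which the post does not show.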

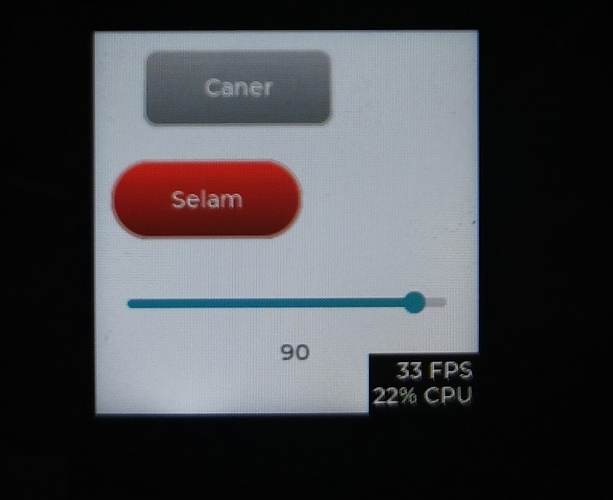

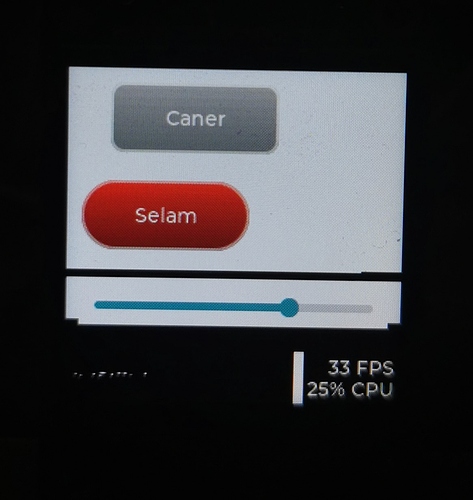
